CivicLoon

On-device AI for understanding Minnesota legislation

I wanted three things from a side project. The AI had to run on the device. It had to work on every platform people actually use. And it had to solve a real problem in civic life, not a synthetic one.

CivicLoon is what came of that.

It reads Minnesota legislation and explains it back to you in plain English. Or Somali. Or Hmong. Or any of thirty languages. The model runs locally on whatever device you’re already on: phone, browser, or desktop.

No account. No login. Your reading stays with you.

Open civicloon.com

or grab it on Google Play / App Store

A loon is the Minnesota state bird. It is also what most people would call you for reading legislative finance reports recreationally.

So: this is for the loons. Civic ones.

The problem

Minnesota’s legislature processes thousands of bills a session.

Most of them are written in dense procedural prose: citations to other sections, subdivisions referencing subdivisions, the nested clause-soup that legislative drafters love and the rest of us don’t read.

The official tools assume you already speak legalese. The journalistic coverage only shows up if a bill is interesting enough to make the news. Almost nothing sits in the middle.

Minnesota also has more major language communities than the legislature publishes in. Thirty-something. Somali, Hmong, S’gaw Karen, Spanish, Arabic, Amharic, Oromo, Vietnamese, Russian, Ukrainian, on and on. The legislature ships exactly one English version of every bill.

Doesn’t have to be that way.

One codebase, every screen

CivicLoon is built on Kotlin Multiplatform with Compose Multiplatform for the UI.

The same Kotlin sources target Android, iOS, Windows, macOS, Linux, the browser through Kotlin/Wasm, and a Ktor server. Screens, state, and view models are shared across all of them. Platform splits only show up where they have to: native inference, file system, address geocoding, server data ingest.

The breadth matters. Civic tooling needs to meet people on whatever device they have. A five-year-old Android. A school iPad. A Linux laptop on a kitchen counter. CivicLoon ships to all of them from the same merge.

On-device by default

The defining constraint was that a tool for civic engagement cannot also be a surveillance product.

Anything you ping a server with leaks information. “This user just read bill HF 4823 about background checks.” Even if the ping is purely for “analytics,” it tells someone what politics live behind that screen.

So the AI runs on the device. The phone reads the bill, summarizes it, and forgets the conversation when you close the screen.

That choice pays four ways.

It’s private. The model is local. Nothing in the codebase needs your reading history to do its job. Privacy here isn’t something I have to defend; it’s how the thing is shaped.

The AI doesn’t need a network. The app keeps working in airplane mode, on a school Wi-Fi that blocks LLM APIs, on a five-year-old phone with flaky service. The network’s only job is fetching public bill data.

No per-token bill. There’s no inference meter running on my side. Every summary, every letter draft, every reading-level swap costs me what the user’s CPU and GPU were going to use anyway. That’s the only reason the app can be free.

It pays for features I couldn’t otherwise afford. Four reading levels of the same summary, generated on demand. Letter drafts in any tone, any position. And a one-time cloud translation that makes every bill available in thirty languages, cached forever. The kind of menu you can offer when none of the items cost you anything.

Two cloud touches are worth admitting to. Pretending otherwise wouldn’t be honest.

A small CPU-only classifier on the server tags each bill as it comes in. It runs on public legislative text, never user data.

The thirty-language translations run through NLLB and AfriNLLB on the cloud side. Translating thousands of public bills isn’t a phone-side job, and re-translating them on every device would be silly.

Neither path sees a user identity, an address, or what someone’s actually reading.

Inference about the bill is fine. Inference about you doesn’t leave the device.

Per-platform inference

The InferenceEngine interface lives in commonMain. Each client

target supplies its own actual implementation, picking whichever

runtime is sanest on that platform.

The server isn’t in the table because it doesn’t run the LLM path. (The bill-tagging classifier mentioned above is the server’s inference, and it’s a different shape of work.)

| Target | Runtime | Format |

|---|---|---|

| Android | llama.cpp via JNI | GGUF |

| iOS | mlx-swift-lm (Apple Silicon GPU) | MLX |

| Desktop (Windows, macOS, Linux JVM) | llama.cpp via JNI | GGUF |

| Web (Kotlin/Wasm) | @huggingface/transformers in a Worker | ONNX |

Each target runs whatever quantized small language model fits its silicon best. The choice can move as the open ecosystem moves.

iOS gets MLX because Apple’s stack is faster on Apple Silicon than a generic llama.cpp Metal build. Loading a model and streaming the first token is the single most expensive thing this app does, so the speed gap matters.

Android and desktop get llama.cpp because it’s the most mature path on those architectures, and the GGUF tooling around it is excellent.

Web gets ONNX through transformers.js because that’s the tool that actually runs in a browser today.

Two engines on iOS, in parallel

Apple’s two on-device LLM paths run on different silicon. CivicLoon uses both.

On iOS 26+, the FoundationModels framework exposes an on-device

LLM that runs on the Neural Engine. CivicLoon picks it up through

a SecondaryInferenceBackend.

The Neural Engine isn’t the GPU. It’s separate silicon. So MLX on the GPU and Foundation Models on the ANE can run at the same time without fighting each other.

InferenceQueue starts a second worker that pulls from the same

priority queue. The two engines run side by side. Effective

throughput roughly doubles.

Two models. One phone. No cross-talk.

The view-model code that asks for inference doesn’t care which

runtime is underneath. Tokens come back through the same

Flow<Token> on every platform.

Try it on this page

The demo below uses the same setup as CivicLoon’s web client: same loader, same worker pattern, model loaded on demand from Hugging Face.

The default text is a real clause from Minnesota’s 2023 free-school-meals law (HF 5, codified at Minn. Stat. 124D.111 subd. 1c).

Click run. The model downloads once, caches, and summarizes in your browser. The page never sends the input or output anywhere.

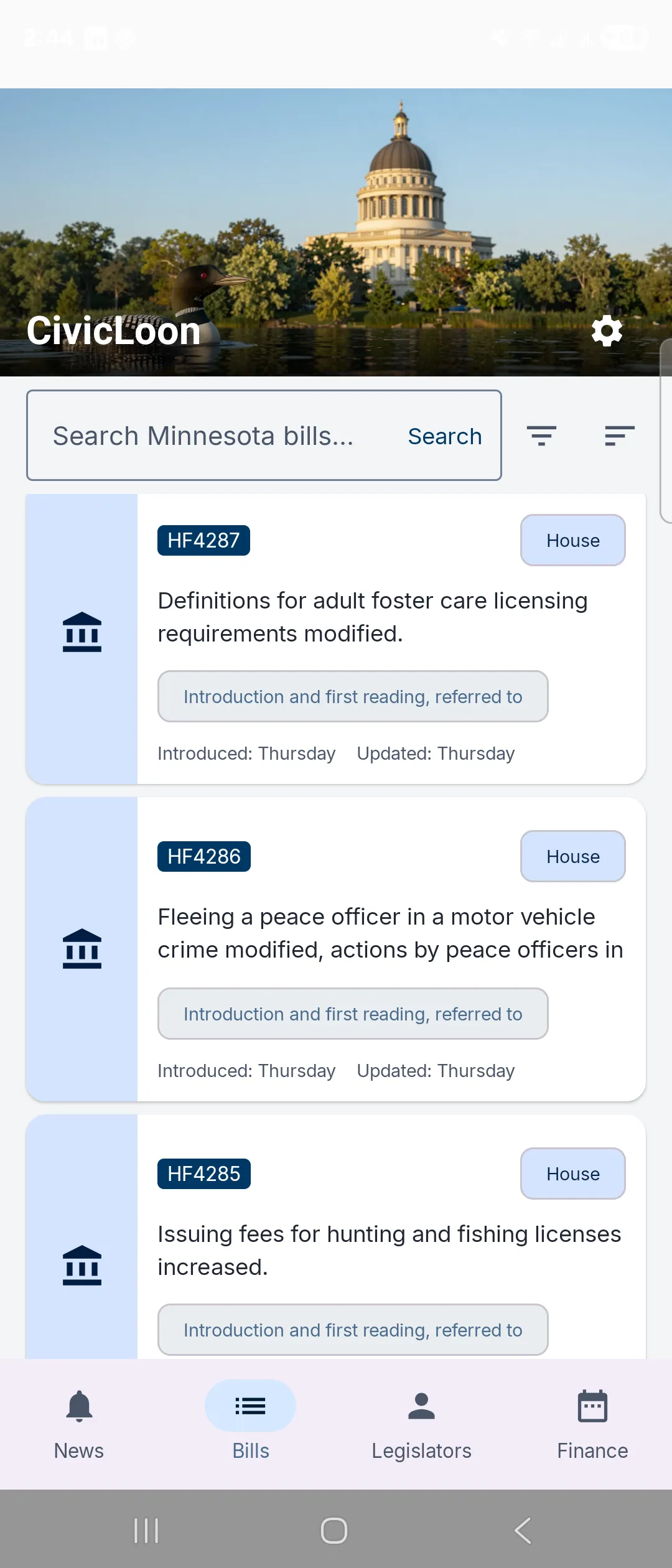

What ships

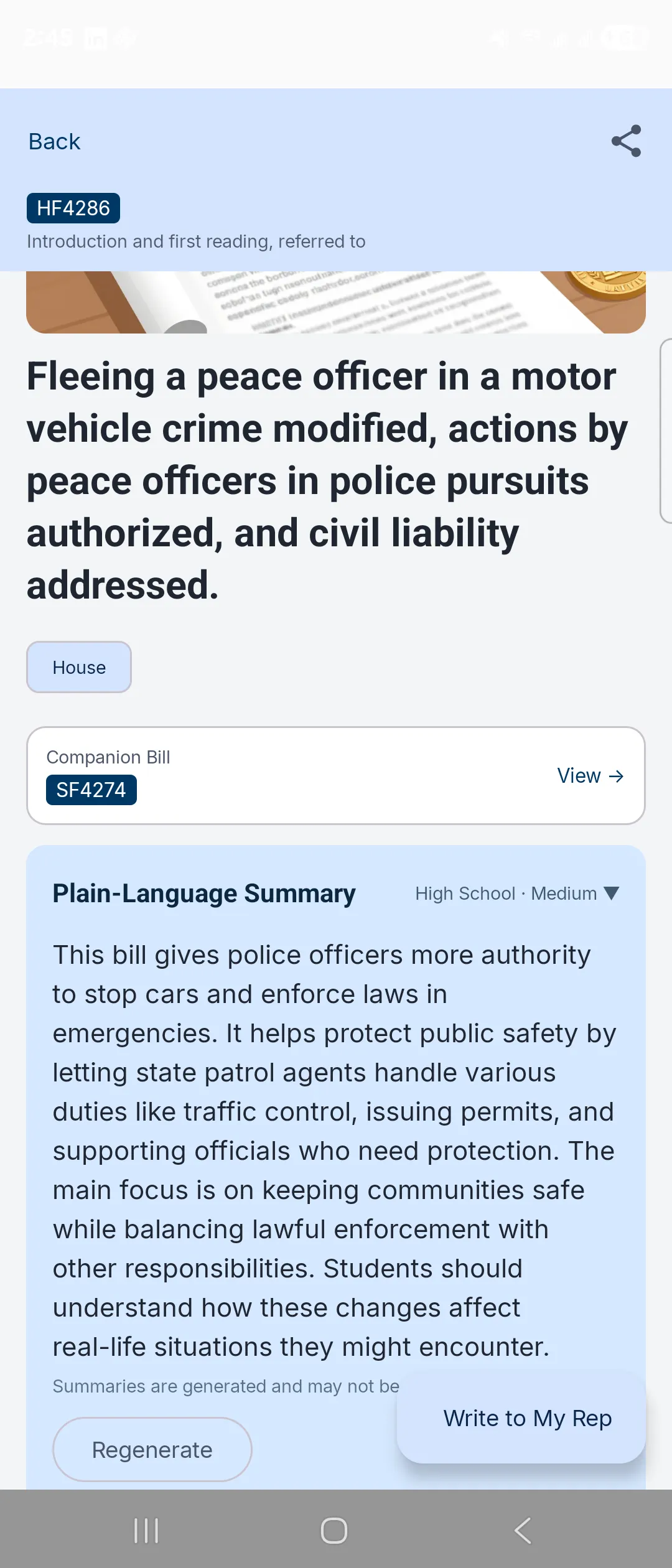

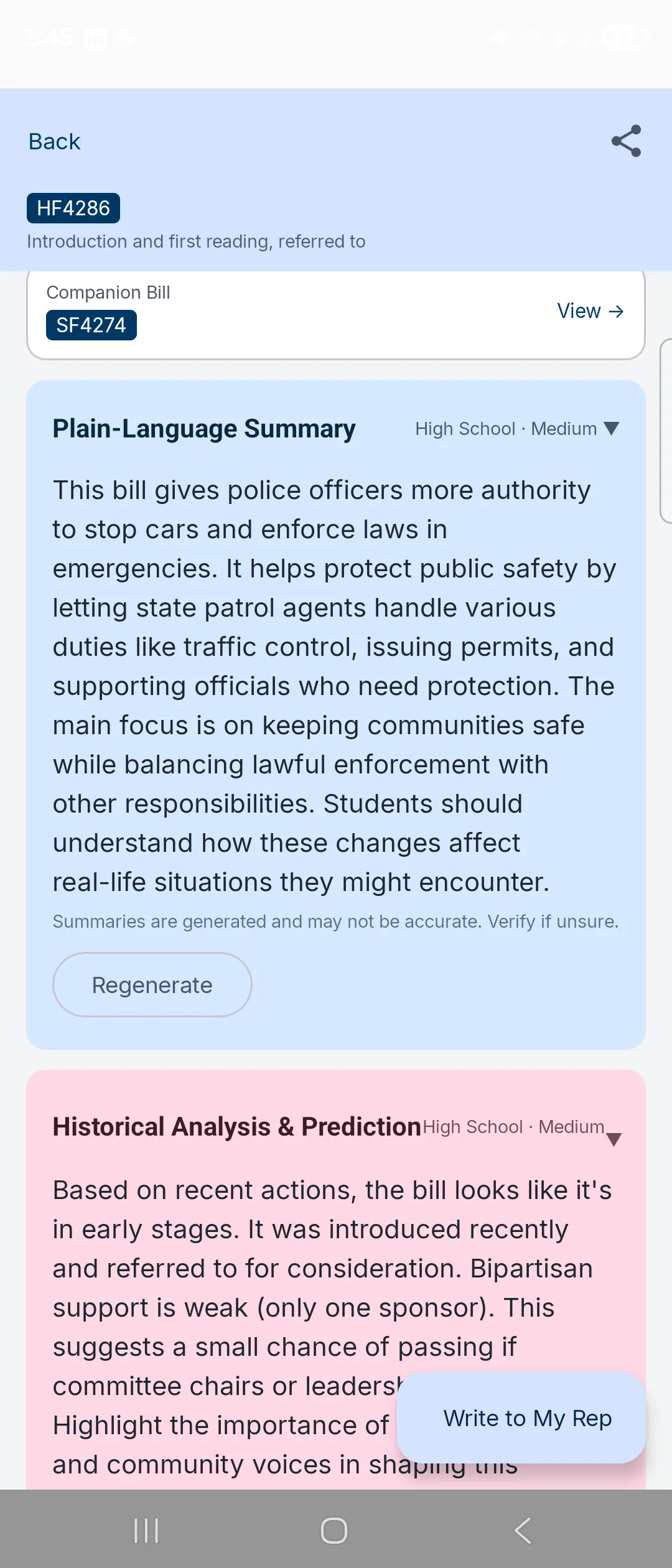

- Bill summaries in four reading levels. Standard, ELI5, High School, Professional. Same bill, different jargon density.

- AI letter drafting to your representatives. Pick a tone and a position; the model produces a draft you edit and send through your own email app.

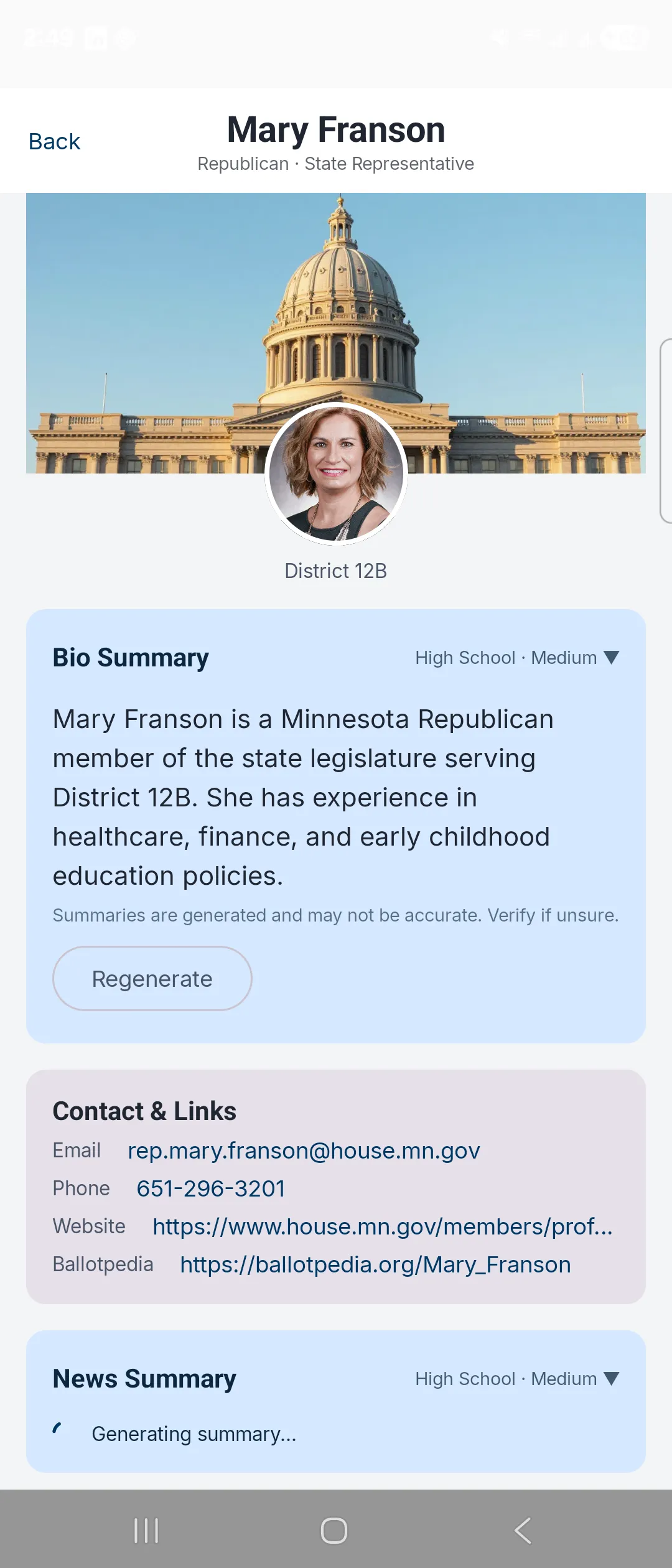

- Find your representatives by Minnesota address. House and Senate, with committee assignments.

- Campaign finance search across contribution records.

- Real-time bill news pulled in alongside the official text.

- Thirty languages, including Somali, Hmong, S’gaw Karen, Spanish, Arabic, Amharic, Oromo, Vietnamese, Russian, and Ukrainian.

- No required login. Reading history stays on your phone.

How it got built

Three weekends of side-project hours.

I’d been using coding agents at work for months. Mostly mobile, on familiar Compose Multiplatform and SwiftUI ground. The piece I hadn’t put real time into was Claude Code specifically, or, more honestly, the backend half of a real product.

I’m a mobile architect. I look at backend code only rarely, and writing it from scratch is a different muscle entirely. CivicLoon was the project that forced both.

Along the way I picked up Redis for the lookup caches, Kotlin Exposed plus PostgreSQL for the database layer, and Ktor for the server itself. Plus the surprisingly civilized experience of running tiny Azure container instances for hosting.

The Claude Code half went smoothly enough that the actual constraint kept becoming “how much new infrastructure can I learn before Sunday night.”

A few things became clear fast.

Coding agents are extraordinarily good at “you handle the boring parts” tasks: scaffold a screen, wire up a viewmodel, write the third copy-paste of a similar list adapter, set up the gradle module that always takes me an hour and a half.

They are bad at “this is subtle, here are the constraints, figure it out.” Knowing which kind of task you were holding turned out to be most of the skill.

Agents read the same code you do and reach completely different inferences from it. The job is knowing whose inference to ship. (The other one, eventually, is also yours.)

The other surprise was how much “just type at it confidently” eats your day. I caught myself, more than once, accepting a suggestion I didn’t fully understand because the project felt like it was moving.

Three weekends does not afford that.

So I started re-reading the diff before each commit, the way I would on a code review where I didn’t trust the author yet. Except the author was me, twenty minutes ago.

If you can’t explain what you just committed to a colleague, you haven’t read it carefully enough. The fact that an agent wrote it is your problem, not the colleague’s.

Press

- MinnPost. “New app using AI aims to expand civic engagement in Minnesota” by Ellen Schmidt (April 21, 2026). The piece was picked up by the Associated Press and ran in regional outlets across the country, including Eden Prairie Local News, Sun This Week, and KIMT.

I built the thing to learn an instrument. The instrument turned out to play loud enough that the AP wire picked up the signal.

Not the outcome I’d planned. Welcome anyway.

Screenshots